The Role of Attributes in Adding Context Beyond the Basic Label

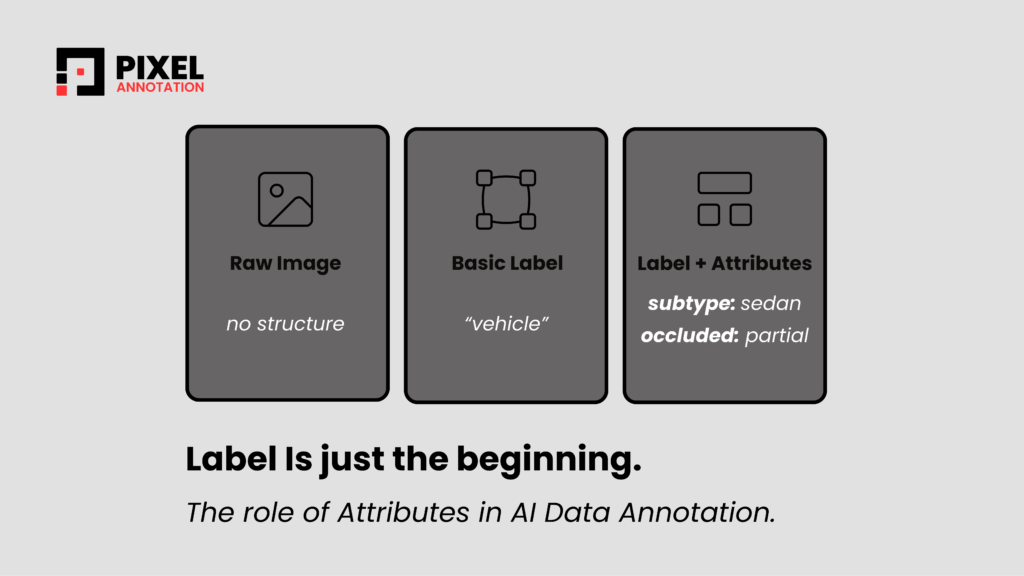

Every image annotation project begins the same way: someone draws a bounding box and assigns a label. “Car.” “Phone.” “Trash bin.” That label is the foundation — but in isolation, it is rarely enough to power a production AI model. The label tells the model what it is looking at. Attributes tell it what that thing actually means in context.

Leading image annotation companies have learned this lesson through experience. The difference between a dataset that trains a mediocre model and one that trains an exceptional one is almost never the number of images. It is the depth and consistency of the structured metadata: the annotation attributes layered beneath every label.

What are attributes, and why do they matter?

In the context of AI annotation taxonomy design, attributes are structured fields that capture properties of an annotated object that a single label cannot convey. A label is a noun. Attributes are everything else: the sub-type, the condition, the material, the function, the context.

A well-designed attribute framework turns a flat list of labels into a rich, queryable knowledge structure — one that downstream models and applications can actually reason with.

Consider three very different annotation projects — waste classification, electronics detection, and vehicle recognition. Each uses labels at the top level. But it is the attributes beneath those labels that make the data genuinely useful.

| Waste / Garbage | Electronics / Devices | Vehicles / Automobiles |
| --- | --- | --- |
| Label: Recyclable Waste | Label: Handheld Device | Label: Motor Vehicle |
| Sub-Category: Plastic | Sub-Category: Smartphone | Sub-Category: Light Vehicle |
| Object Type: PET Bottle | Object Type: Touchscreen | Object Type: Sedan |
| Condition: Crushed | State: Screen-on | View Angle: Front-left |
| Contaminated: No | Orientation: Portrait | Occlusion: Partial |

In each case, a model trained only on the top-level label would have almost no ability to differentiate within those categories. The attributes are what make differentiation possible. A smart waste sorting system needs to know if a bottle is crushed and uncontaminated. An autonomous vehicle system needs the exact view angle and occlusion level of each detected car. A device recognition model needs to distinguish screen state and orientation.
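As a rough illustration, the waste and vehicle columns above could be stored as records like the following. This is a minimal sketch, not a prescribed schema: the `Annotation` class, the `select` helper, the snake_case field names, the bounding-box values, and the "Intact" condition value are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Annotation:
    """One bounding box with a top-level label and its attribute fields."""
    image_id: str
    bbox: tuple[float, float, float, float]  # (x, y, width, height) in pixels
    label: str
    attributes: dict[str, str] = field(default_factory=dict)


# Hypothetical records mirroring the table columns.
annotations = [
    Annotation("img_001", (34, 50, 120, 260), "Recyclable Waste",
               {"sub_category": "Plastic", "object_type": "PET Bottle",
                "condition": "Crushed", "contaminated": "No"}),
    Annotation("img_002", (10, 12, 300, 180), "Motor Vehicle",
               {"sub_category": "Light Vehicle", "object_type": "Sedan",
                "view_angle": "Front-left", "occlusion": "Partial"}),
    Annotation("img_003", (80, 40, 110, 240), "Recyclable Waste",
               {"sub_category": "Plastic", "object_type": "PET Bottle",
                "condition": "Intact", "contaminated": "Yes"}),
]


def select(records, label, **required):
    """Return records with the given label whose attributes match every keyword."""
    return [r for r in records
            if r.label == label
            and all(r.attributes.get(k) == v for k, v in required.items())]


# The label alone cannot separate img_001 from img_003; the attributes can.
sortable = select(annotations, "Recyclable Waste",
                  condition="Crushed", contaminated="No")
print([r.image_id for r in sortable])  # ['img_001']
```

Both waste records share the same label, so a label-only query returns both; only the attribute filter isolates the crushed, uncontaminated bottle.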

This is where the investment in attribute design pays off. Each additional attribute field adds another structured data point to every annotation, so a single labeled box becomes five, six, or seven distinct values that downstream models can query, filter, and train on.

The principle: classify by function, not form

One of the most common errors in attribute-based annotation is classifying based on what an object looks like rather than what it actually is. Two objects can share a nearly identical shape but belong in completely different taxonomy branches based on their real-world function.

A crushed plastic bottle and an intact one may look very different, yet both are the same object type; the difference belongs in a condition attribute, not in a separate sub-category. Likewise, a sedan photographed from the front and one photographed from the rear are the same object type, but if an annotator assigns different sub-categories based on visual shape alone, the dataset becomes inconsistent. Training annotators to ask “what is this used for, and where does it appear in the real world?” is one of the most important calibration exercises an image annotation company can run.
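One way to catch this kind of inconsistency after the fact is to check that each object type always maps to a single sub-category, whatever the view angle or condition. The sketch below assumes annotations are plain dictionaries; the function name `inconsistent_object_types`, the field names, and the deliberately wrong "Heavy Vehicle" value are hypothetical.

```python
from collections import defaultdict


def inconsistent_object_types(records):
    """Return object types that were assigned more than one sub-category."""
    seen = defaultdict(set)
    for r in records:
        seen[r["object_type"]].add(r["sub_category"])
    # Sort the sub-categories so the report is stable between runs.
    return {obj: sorted(subs) for obj, subs in seen.items() if len(subs) > 1}


# Hypothetical records: the rear-view sedan was mislabeled by shape.
records = [
    {"image_id": "a", "object_type": "Sedan",
     "sub_category": "Light Vehicle", "view_angle": "Front-left"},
    {"image_id": "b", "object_type": "Sedan",
     "sub_category": "Heavy Vehicle", "view_angle": "Rear"},
    {"image_id": "c", "object_type": "PET Bottle",
     "sub_category": "Plastic", "condition": "Crushed"},
    {"image_id": "d", "object_type": "PET Bottle",
     "sub_category": "Plastic", "condition": "Intact"},
]

conflicts = inconsistent_object_types(records)
print(conflicts)  # {'Sedan': ['Heavy Vehicle', 'Light Vehicle']}
```

The two PET bottles differ in condition but share one sub-category, so they pass; the sedan is flagged because its sub-category changed with the view angle.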

Conditional attributes: knowing when not to annotate

Not every attribute applies to every object. One of the most powerful design decisions in an AI annotation taxonomy is defining which attributes are conditional, that is, applicable only under certain circumstances.

In a vehicle annotation project, “Licence Plate Visible” is only meaningful for on-road vehicles. In an electronics project, “Screen State” only applies to devices with displays. In a waste project, “Contamination Level” only applies to materials that can be contaminated. Forcing annotators to fill every field regardless of relevance leads to placeholder data that pollutes the training set. Conditional rules prevent this.

| Without conditionality | With conditional attributes |
| --- | --- |
| Every field filled for every object | Fields activate only when relevant |
| Placeholder values enter the dataset | “Not Applicable” carries real meaning |
| Annotators guess at irrelevant fields | Annotators follow clear decision rules |
| Dataset consistency breaks down | Dataset remains logically consistent |
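Conditional rules of this kind can be expressed as small predicates that a validation step runs over each annotation. The following is a minimal sketch under stated assumptions: the rule table `CONDITIONAL_RULES`, the `validate` function, and the `road_context` and `has_display` fields with their values are hypothetical, chosen to echo the licence plate and screen state examples.

```python
NOT_APPLICABLE = "Not Applicable"

# Hypothetical rules: attribute name -> predicate deciding whether it applies.
CONDITIONAL_RULES = {
    "licence_plate_visible": lambda a: a.get("road_context") == "On-road",
    "screen_state": lambda a: a.get("has_display") == "Yes",
}


def validate(attributes):
    """Return a list of rule violations for one annotation's attributes."""
    errors = []
    for name, applies in CONDITIONAL_RULES.items():
        value = attributes.get(name)
        if applies(attributes):
            # The field is relevant, so a real value is required.
            if value is None or value == NOT_APPLICABLE:
                errors.append(f"{name}: required but missing")
        elif value not in (None, NOT_APPLICABLE):
            # The field is irrelevant, so any real value is a placeholder.
            errors.append(f"{name}: filled in but not applicable")
    return errors


on_road = {"road_context": "On-road", "licence_plate_visible": "Yes"}
off_road = {"road_context": "Off-road", "licence_plate_visible": "No"}

print(validate(on_road))   # []
print(validate(off_road))  # ['licence_plate_visible: filled in but not applicable']
```

Running a check like this at submission time means an irrelevant field is either left empty or explicitly marked "Not Applicable", rather than filled with a guess.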

How we ensure precise annotation

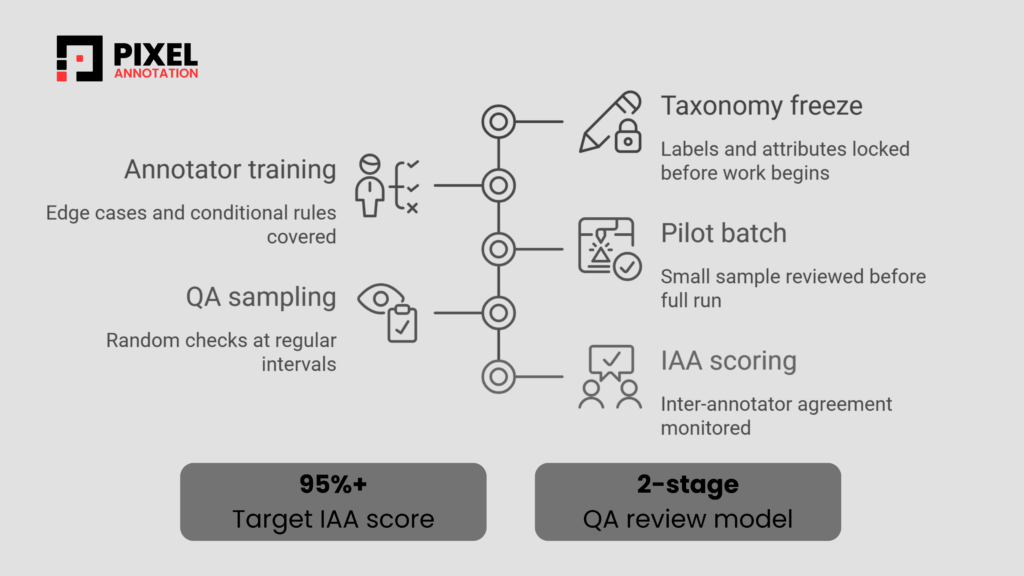

Building a rich attribute system is only half the challenge. The other half is ensuring every annotator applies it consistently, across thousands of images, over extended project timelines. Here is how a rigorous annotation workflow maintains precision at scale.

Quality standards we target

- 95%+ IAA score — inter-annotator agreement tracked per attribute field, not just per label

- 2-stage QA model — every batch passes both peer review and senior QA before delivery

- Zero mid-project taxonomy changes: the taxonomy is frozen before work begins and stays fixed for the full run

- Pilot batch completed and calibrated before the full dataset run starts

- Edge cases are logged, reviewed, and added to annotator training materials

- Attribute distribution reports reviewed regularly to catch drift and skew early
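Tracking agreement per attribute field, rather than per label, might look like the sketch below. It assumes two annotators, uses raw percent agreement as the score, and treats 0.95 as the target from the list above; the list does not specify how IAA is computed, and a chance-corrected measure such as Cohen's kappa may be preferable in practice. The function name, field names, and sample values are hypothetical.

```python
from collections import defaultdict

TARGET = 0.95  # the 95% agreement target, applied per field


def agreement_per_field(annotator_a, annotator_b):
    """Percent agreement per attribute field between two annotators.

    Each argument maps object_id -> {field: value}. Only objects
    annotated by both annotators are compared.
    """
    matches = defaultdict(int)
    totals = defaultdict(int)
    for obj_id in annotator_a.keys() & annotator_b.keys():
        a, b = annotator_a[obj_id], annotator_b[obj_id]
        for name in a.keys() | b.keys():
            totals[name] += 1
            if a.get(name) == b.get(name):
                matches[name] += 1
    return {name: matches[name] / totals[name] for name in totals}


# Hypothetical double-annotated batch of four objects.
annotator_a = {
    "obj1": {"view_angle": "Front-left", "occlusion": "Partial"},
    "obj2": {"view_angle": "Rear", "occlusion": "None"},
    "obj3": {"view_angle": "Front-left", "occlusion": "Partial"},
    "obj4": {"view_angle": "Side", "occlusion": "None"},
}
annotator_b = {
    "obj1": {"view_angle": "Front-left", "occlusion": "Partial"},
    "obj2": {"view_angle": "Rear", "occlusion": "None"},
    "obj3": {"view_angle": "Front", "occlusion": "Partial"},
    "obj4": {"view_angle": "Side", "occlusion": "None"},
}

scores = agreement_per_field(annotator_a, annotator_b)
below_target = sorted(name for name, s in scores.items() if s < TARGET)
print(below_target)  # ['view_angle']
```

Here both annotators agree on occlusion for every object, but they disagree on view angle for one of four, which a per-label score would never reveal.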

Designing AI annotation attributes that scale

The best attribute frameworks are designed with the end model in mind. Before any annotation begins, the key question is: what decisions will the model need to make, and what information does it need to make them correctly? The answer should directly determine which attributes get defined, and which get left out.

Over-engineering attributes is as dangerous as under-engineering them. Too few attributes leave the model without the context it needs. Too many create annotator fatigue, introduce noise, and slow down throughput without meaningfully improving model performance. The right number of attributes is the minimum set needed to support the downstream use case.

The goal of a well-designed AI annotation taxonomy is not to capture everything about an object. It is to capture exactly what the model needs to know, and nothing more.

Attributes as the foundation of reusable data

One of the most underappreciated benefits of a well-built attribute system is reusability. A dataset annotated with rich, consistent attributes can serve multiple downstream tasks from a single annotation pass.

Vehicle images annotated with sub-category, view angle, occlusion level, and road context can train an autonomous driving model, a parking management system, and a traffic monitoring classifier — all without re-annotation. Waste images tagged with material type, condition, and contamination status can power both recycling robots and environmental compliance reporting tools. Electronics images annotated with device type, screen state, and orientation can support retail inventory systems, device repair triage, and consumer product recognition simultaneously.
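To make the reuse concrete, the sketch below derives three task-specific training sets from one annotated pool by choosing a different filter and target attribute per task. Everything here is an illustrative assumption: the `TASKS` table, the task names, the field names, and the attribute values are invented for the example.

```python
# Hypothetical pool of vehicle annotations from a single annotation pass.
records = [
    {"image_id": "v1", "sub_category": "Light Vehicle",
     "view_angle": "Front-left", "occlusion": "Partial",
     "road_context": "Parking lot"},
    {"image_id": "v2", "sub_category": "Heavy Vehicle",
     "view_angle": "Rear", "occlusion": "None",
     "road_context": "Highway"},
    {"image_id": "v3", "sub_category": "Light Vehicle",
     "view_angle": "Side", "occlusion": "Full",
     "road_context": "Highway"},
]

# Each task picks which records it needs and which attribute it predicts.
TASKS = {
    "parking_management": {
        "keep": lambda r: r["road_context"] == "Parking lot",
        "target": "sub_category",
    },
    "traffic_monitoring": {
        "keep": lambda r: r["road_context"] == "Highway",
        "target": "sub_category",
    },
    "driving_perception": {
        "keep": lambda r: r["occlusion"] != "Full",
        "target": "view_angle",
    },
}


def build_task_dataset(records, task):
    """Return (image_id, target_value) pairs for one downstream task."""
    spec = TASKS[task]
    return [(r["image_id"], r[spec["target"]])
            for r in records if spec["keep"](r)]


print(build_task_dataset(records, "parking_management"))
# [('v1', 'Light Vehicle')]
print(build_task_dataset(records, "driving_perception"))
# [('v1', 'Front-left'), ('v2', 'Rear')]
```

Adding a new use case then means adding one entry to the task table, provided the attributes it depends on were captured in the original pass.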

This is the compounding return on investment that comes from getting AI annotation attributes right the first time. And it is what separates annotation work that ages well from work that has to be redone every time a new use case emerges.

Conclusion: the label is just the beginning

In AI development, it is tempting to measure annotation work by volume — how many images labeled, how fast, at what cost. But volume without structure produces datasets that are large and brittle. Models trained on them detect objects reliably in controlled conditions and fail unpredictably in the real world.

Attributes are what bridge that gap. They transform a flat list of labels into a structured, queryable representation of the world — one that reflects how objects actually behave, vary, and relate to their context. Whether you are building a smart waste management system, a vehicle recognition pipeline, or an electronics classifier, the depth of your attribute framework will determine how far your model can go beyond basic detection.

The investment is made upfront — in taxonomy design, annotator training, and quality processes. But it pays back many times over: in model accuracy, in dataset reusability, and in the confidence that every label in your training data means exactly what it is supposed to mean.

Precise annotation is not just a quality standard. It is a competitive advantage — for the models built on it, and for the teams that know how to deliver it.

We specialize in taxonomy-driven image annotation across computer vision domains — delivering training data that goes beyond the label and is built to power production-grade AI.

What we deliver:

- Multi-level taxonomy design and documentation

- Attribute framework tailored to your specific use case

- Rigorous QA with inter-annotator agreement tracking

- Domain expertise in vehicles, waste, electronics, and more

- Scalable annotation across large and complex datasets

- Zero mid-project taxonomy drift — consistent from image 1 to image 100,000

Get in touch to discuss your project requirements. No commitment required — we are happy to talk through scope and approach first.